While at the Olympic Qualification Tournament in Berlin, I was chatting with a colleague who at one point commented on the large number of service errors after timeouts, and said that coaches should take more timeouts in order to ‘force’ such errors. I have made this point before, here.** However, as new information has come to hand, I have modified my position, for example here. When I related this new information, my colleague was adamant that while sideout percentage may remain the same, service errors are definitely higher after timeouts. Sadly, it didn’t occur to me until later that, given that the object of a timeout is presumably to win a sideout, how you win the sideout (by service error or otherwise) is actually irrelevant. But even as I maintained my (current) position that the value of timeouts is overrated, and explained the effect of confirmation bias, my colleague refused to be budged from his contention.

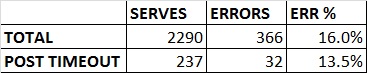

However, I had no proof at hand, so I decided to do a short study. I looked at my team’s matches from the current season. We have played 13 matches, which have produced 2,290 serves and 237 timeouts (including team timeouts and technical timeouts). While it is not a study of the whole league, I think the sample sizes are large enough to make a reality-based observation (i.e. independent of confirmation bias), if not to establish an actual fact.

So, according to my preliminary study, the probability of a service error in the Polish Plusliga is actually LOWER after a timeout than in general play.

“But wait!!!”, I can hear you say. “These are professional players and their coaches tell them in every timeout not to make an error.” I can only speak for myself, but in the entire season I have never once mentioned service errors and timeouts in the same sentence. I have on three or four occasions asked a jump server to float serve, but never talked about errors. Maybe other coaches do talk incessantly about it.

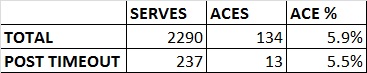

I was also able to calculate the effect of timeouts on aces from the same sample.

As the ace rate after a timeout is almost exactly the same as in general play, one could infer that the players are not making easier serves to avoid errors. It seems likely that the quality of the serve remains the same regardless of the timeout.
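For readers who want to repeat the tally on their own team, here is a minimal Python sketch of the two rates discussed above: error rate and ace rate, split by whether a serve is the first one after a timeout. The `Serve` record, its field names and the outcome labels are hypothetical assumptions about how scouting data might be exported. The toy list is made up for illustration and is not data from this study.

```python
from dataclasses import dataclass


@dataclass
class Serve:
    # Hypothetical record format; field names and outcome labels are assumptions.
    outcome: str         # "error", "ace" or "in_play"
    after_timeout: bool  # True if this is the first serve after a timeout


def serve_rates(serves):
    """Return {group: (n_serves, error_rate, ace_rate)} for post-timeout vs. other serves."""
    groups = {"after_timeout": [], "other": []}
    for s in serves:
        groups["after_timeout" if s.after_timeout else "other"].append(s)
    rates = {}
    for name, items in groups.items():
        n = len(items)
        if n == 0:
            rates[name] = (0, float("nan"), float("nan"))
            continue
        errors = sum(s.outcome == "error" for s in items)
        aces = sum(s.outcome == "ace" for s in items)
        rates[name] = (n, errors / n, aces / n)
    return rates


# Toy data for illustration only (not from the study).
toy = (
    [Serve("error", True), Serve("in_play", True), Serve("in_play", True), Serve("ace", True)]
    + [Serve("error", False), Serve("ace", False)] + [Serve("in_play", False)] * 3
)
rates = serve_rates(toy)
print(rates)
```

Keeping "after timeout" as a flag on each serve, rather than a separate list, makes it easy to add other splits later (set, score, server).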

Of course, maybe you are right. Maybe it is different in your league. But you don’t actually know and you won’t know until you do the study. I look forward to hearing your results.

** I understand that the existence of this post could be construed as evidence as to why you should ignore this one, but let’s move on.

Nice study Mark! It would be nice to add the success of a serve after a timeout. I think it’s enough to take the average success of your serves for comparison. If possible, the average serving success of every single player would be nice to know. Then compare this value to the average success of serves after a timeout, including errors. This way it should be possible to evaluate the influence of a timeout on serving success, and the influence of psychological pressure and the interruption of the game flow caused by a timeout.

Because I think that a professional player is able to handle this situation better than a hobby player, by not making a serving error. But it could be possible to measure the influence by comparing serving success in a normal situation with serving success after a timeout.

Cheers

Dominik
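Dominik's per-player comparison could be sketched roughly as below. This is a hypothetical illustration. The `(player, is_error, after_timeout)` tuple format and the `min_after` threshold are assumptions, and "success" is narrowed to "not a service error" for simplicity. The threshold reflects Mark's point that individual post-timeout samples are small.

```python
from collections import defaultdict


def per_player_error_rates(serves, min_after=5):
    """Return {player: (overall_error_rate, post_timeout_error_rate, n_post_timeout)}.

    `serves` is an iterable of (player, is_error, after_timeout) tuples, a
    hypothetical format. Players with fewer than `min_after` post-timeout
    serves are left out, because their post-timeout rate would be too noisy.
    """
    # per player: [serves, errors, post-timeout serves, post-timeout errors]
    totals = defaultdict(lambda: [0, 0, 0, 0])
    for player, is_error, after_timeout in serves:
        t = totals[player]
        t[0] += 1
        t[1] += int(is_error)
        if after_timeout:
            t[2] += 1
            t[3] += int(is_error)
    return {
        player: (errors / n, errors_after / n_after, n_after)
        for player, (n, errors, n_after, errors_after) in totals.items()
        if n_after >= min_after
    }


# Toy data for illustration only: player "A" has 5 post-timeout serves,
# player "B" only 3, so "B" is filtered out.
toy = (
    [("A", True, True)] + [("A", False, True)] * 4
    + [("A", True, False)] + [("A", False, False)] * 4
    + [("B", False, True)] * 3
)
print(per_player_error_rates(toy))
```

Comparing each player's post-timeout rate against their non-timeout serves only, rather than all serves, would be a natural variation.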


I have added the number of aces after timeouts.

You make a great point. Of course to get such individual results and for them to have reasonable sample sizes, you would need to study dozens of matches. I will happily share my raw data if you want to do that study 🙂


I’d be interested to dig* deeper into the data with you if you’re keen.

*Poor pun totally intended



Interesting post, but more data needed. The probability of error on a post-timeout serve is 13.5%; the error rate on non-post-timeout serves is 334 errors from 2053 serves, or 16.3%. Throw these numbers through a chi-squared test and it gives a p-value of 0.26: not significant. So you don’t have a large enough sample to make any definitive statement about the effect of timeouts on error rate. (Also, strictly speaking, the model needs to take individual effects into account, because you have repeated observations on a limited number of individuals, as Dominik points out.)
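The chi-squared calculation can be reproduced with a short Python sketch using only the standard library. The 237 post-timeout serves and the 334 errors from 2053 other serves come from the post and the comment. The 32 post-timeout errors are inferred from the quoted 13.5% (32/237 ≈ 13.5%) rather than stated anywhere. This version uses Pearson's test without a continuity correction, which is an assumption about how the figure was obtained.

```python
import math


def chi2_2x2(a, b, c, d):
    """Pearson chi-squared test (no continuity correction) for a 2x2 table.

    Table layout:       error   no error
      after timeout       a        b
      other serves        c        d
    Returns (chi2 statistic, p-value) with 1 degree of freedom.
    """
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    chi2 = 0.0
    for obs, row, col in ((a, row1, col1), (b, row1, col2), (c, row2, col1), (d, row2, col2)):
        expected = row * col / n
        chi2 += (obs - expected) ** 2 / expected
    # For 1 degree of freedom, P(X > chi2) = erfc(sqrt(chi2 / 2)).
    p = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p


# 237 post-timeout serves are from the post; 32 errors is inferred from the
# quoted 13.5%, not a figure given directly.
post_serves, post_errors = 237, 32
other_serves, other_errors = 2053, 334
chi2, p = chi2_2x2(post_errors, post_serves - post_errors,
                   other_errors, other_serves - other_errors)
print(f"chi2 = {chi2:.2f}, p = {p:.2f}")
```

On these inferred counts the statistic comes out at about 1.2 and the p-value at about 0.27, close to the 0.26 quoted. Applying Yates' continuity correction would push the p-value higher. Either way the conclusion in the comment is unchanged.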


I am certainly not claiming that this study is statistically significant, just perhaps indicative.

However, as my personal hypothesis is that there is no difference between serves after timeouts and serves not after timeouts, your data supports my hypothesis 🙂


Returning to sample sizes. I realize that this is much more of a nerdy statistical direction than you were intending, but there’s some interesting material here for those of us with such leanings.

Asking whether there is any effect at all (of timeout on serve error) is in some senses not the right question to ask. Imagine for a moment that the effect (if it exists) is tiny (say, 0.1% higher service error rate after timeouts). An enormous amount of data would be needed to detect this. If you don’t detect an effect, does that mean that it’s not there, or that your sample size is too small to give you the power needed to detect it?

However, a 0.1% difference is of marginal interest because it would have little real effect on match outcomes. Perhaps a more productive question is whether the effect exists *at a certain effect size*. And given that, we can calculate the sample size needed.

The overall serve error rate from these data is about 16%, or roughly one in six serves. Let’s say we’d care if the effect size was 20% or more (i.e. service error rate after timeout at least 20% higher than normal service error rate, which would make it one service error in five serves or so). By my calculations, we’d need data from about 6000 serves in total (of which 620 would be post-timeout serves).
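The comment doesn't say which assumptions sit behind the 6000 / 620 figures. One set that reproduces them closely, offered here only as a guess, is: a one-sided test at the 5% level, 80% power, a baseline error rate of 16% against a post-timeout rate of 20% (roughly one in five), and the same share of post-timeout serves as in Mark's data (237 of 2290). The sketch below uses the standard normal-approximation formula for two proportions with unequal group sizes, without continuity correction.

```python
import math
from statistics import NormalDist


def two_proportion_sample_size(p_treat, p_ctrl, ratio, alpha=0.05, power=0.8, one_sided=True):
    """Sample sizes for detecting p_treat vs. p_ctrl with unequal groups.

    Normal-approximation formula without continuity correction.
    ratio = n_ctrl / n_treat. Returns (n_treat, n_ctrl), rounded up.
    """
    z = NormalDist().inv_cdf
    z_alpha = z(1 - alpha) if one_sided else z(1 - alpha / 2)
    z_beta = z(power)
    # Pooled proportion under the null hypothesis, weighted by group size.
    p_bar = (p_treat + ratio * p_ctrl) / (1 + ratio)
    term_null = z_alpha * math.sqrt(p_bar * (1 - p_bar) * (1 + 1 / ratio))
    term_alt = z_beta * math.sqrt(p_treat * (1 - p_treat) + p_ctrl * (1 - p_ctrl) / ratio)
    n_treat = math.ceil((term_null + term_alt) ** 2 / (p_treat - p_ctrl) ** 2)
    return n_treat, math.ceil(ratio * n_treat)


# Assumed inputs (a guess at the commenter's setup, not stated in the comment).
n_post, n_other = two_proportion_sample_size(0.20, 0.16, ratio=2053 / 237)
print(n_post, n_other, n_post + n_other)
```

With these inputs it returns roughly 620 post-timeout serves and about 6000 in total. Switching to a two-sided test, or to 19.2% (exactly 20% above 16%), increases the required sample noticeably.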

Now, all of that said: I think the much more interesting aspect of this whole discussion is to do with other interactions around timeouts and serving. As Anton pointed out, games happen in real time with real people, and an athlete’s performance and decisions during one point will be determined to some extent by the points that came before it. Timeouts arguably affect a team’s momentum, and so on. Any effect of timeouts on service errors is going to be mixed amongst a lot of other factors. Exploring these would be a very interesting exercise, if a suitably rich data set is available. Mark?


If you are asking whether I have access to a big data set, the answer is yes. If you are asking whether I can do the study, I can’t. I am happy to share the data if someone else wants to, though.

In some sense the question is ultimately one of momentum, specifically ‘does momentum exist?’. I would tend towards the side of no.


Hi Mark – I’d be keen (and able) to do some further analyses on your data. Ping me an email …


I think I made this point before, but you can’t compare the AVG with the point after a timeout.

You should compare the points before a timeout with the point after the timeout. I am sure you will find the following:

Service errors before a timeout: almost 0%. Aces before a timeout: way higher than after a timeout.

That’s why you take a timeout: to go back to normal / AVG, or to interrupt the opponent’s serving run, which most of the time doesn’t include service errors but does include service aces 🙂


Firstly, the specific point of the ‘study’ is to question the contention / assumption / conventional wisdom / fallacy that there ARE more service errors after a timeout.

As to the general point about timeouts, you raise an interesting perspective. We can do the study as a thought experiment. Assuming normal conditions, the sideout percentage of the attempt before the timeout is 0%. The highest sideout percentage of the previous two attempts is at most 50%. Therefore you are completely correct that if the SO% after the timeout is greater than 50%, the timeout obviously increases your odds of siding out.

However, given that the odds of siding out are the same whether there is a timeout or not, NOT taking the timeout increases your chance of siding out by exactly the same amount.

Sure, if the coach takes the timeout, he ‘caused’ the sideout. Except that the action he took did nothing to actually change the odds of success.
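Mark's argument that the coach "caused" the sideout without changing the odds is a selection effect, and it can be illustrated with a small simulation. The assumptions are all hypothetical: every receive attempt is sided out independently with the same probability (0.6 here, an arbitrary choice), and the coach calls a timeout after two failed attempts in a row. Timeouts have no effect in this model by construction.

```python
import random


def simulate_timeouts(p_sideout=0.6, trigger=2, n_attempts=200_000, seed=1):
    """Return (SO% just before a timeout, SO% just after a timeout, overall SO%).

    Hypothetical model: each receive attempt succeeds independently with
    probability p_sideout, and a timeout is called the moment the receiving
    team has failed `trigger` times in a row. The timeout itself changes nothing.
    """
    rng = random.Random(seed)
    attempts = [rng.random() < p_sideout for _ in range(n_attempts)]
    before, after = [], []
    streak = 0  # consecutive failed sideout attempts in the current run
    for i in range(n_attempts - 1):
        streak = 0 if attempts[i] else streak + 1
        if streak == trigger:
            before.append(attempts[i])     # always False: that is why the timeout was called
            after.append(attempts[i + 1])  # the first attempt after the timeout
    return (sum(before) / len(before),
            sum(after) / len(after),
            sum(attempts) / n_attempts)


before, after, overall = simulate_timeouts()
print(f"before: {before:.3f}  after: {after:.3f}  overall: {overall:.3f}")
```

In this model the attempt just before a timeout is always a failure, while the attempt just after it succeeds at about the baseline rate. A "before vs. after" comparison would therefore make the timeout look effective even though it does nothing. Whether real receive attempts are independent is exactly what the later comments dispute.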


“However, given that the odds of siding out are the same whether there is a timeout or not, NOT taking the timeout increases your chance of siding out by exactly the same amount.”

No, I don’t think so, because you are looking at the AVG SO% after a timeout; that is what your study is about. And how can you make the assumption that the SO% is the same whether you take a timeout or not? I am sure nobody has proved that yet.


My ‘study’, such as it was, was about service errors after timeouts, not sideout percentage.

In one study that I know of (referred to in https://markleb1.wordpress.com/2013/12/01/timing-timeouts/), the avg SO% after a timeout is almost exactly the same as the avg SO%. Hence my answer.


And now that I continue the thought experiment…

Given that service series are finite, logically the odds of siding out on each serve in a series should be progressively higher. If the sideout percentage after a timeout is the same as the average, and the sideout percentage from the third serve is likely higher than the average, taking a timeout would REDUCE the chances of siding out.

I love thought experiments 😀


“The odds of siding out for each serve in a series should be progressively higher” – maybe, but not necessarily. Think of coin tosses – is the chance of getting four tails in a row lower than three tails in a row? Sure. But is the chance of getting tails *on the fourth toss* any different from the chance on any other toss? No, it’s always 50%. In theory this could be tested – if the probability of a sideout was constant, then the service series lengths should show a characteristic distribution. But even I think it’s unlikely that sideout probability is constant – volleyball would be a pretty boring game to watch if it was.
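Ben's "characteristic distribution" is the geometric distribution: if every serve is sided out independently with the same probability s, a service series has length n with probability (1 − s)^(n − 1) · s. A rough Python sketch of the check follows. The input (a list of observed series lengths) is a hypothetical format, s is estimated as one over the mean series length, and series longer than `max_len` are pooled into a final bin.

```python
from collections import Counter


def geometric_run_probs(s, max_len):
    """P(series length == n) for n = 1..max_len if each serve is sided out with constant probability s."""
    return [(1 - s) ** (n - 1) * s for n in range(1, max_len + 1)]


def compare_run_lengths(run_lengths, max_len=6):
    """Compare observed service-series lengths with the constant-probability (geometric) model.

    s is estimated as 1 / mean series length, the maximum-likelihood estimate
    for a geometric distribution on 1, 2, 3, ... Series of max_len or longer
    are pooled into the last bin. Returns (s_hat, [(label, observed, expected)]).
    """
    n_runs = len(run_lengths)
    s_hat = n_runs / sum(run_lengths)
    counts = Counter(min(r, max_len) for r in run_lengths)
    probs = geometric_run_probs(s_hat, max_len - 1)
    probs.append(1 - sum(probs))  # tail bin: P(length >= max_len)
    rows = []
    for n, prob in enumerate(probs, start=1):
        label = f"{n}+" if n == max_len else str(n)
        rows.append((label, counts.get(n, 0), n_runs * prob))
    return s_hat, rows


# Hypothetical series lengths, for illustration only.
s_hat, rows = compare_run_lengths([1, 1, 2, 1, 3, 1, 2, 1, 1, 5], max_len=4)
print(f"estimated sideout probability: {s_hat:.2f}")
for label, observed, expected in rows:
    print(label, observed, round(expected, 2))
```

A formal goodness-of-fit test (such as chi-squared on the binned counts) could be layered on top. One caution: even with no momentum at all, pooling series from strong and weak servers produces more long series than a single geometric curve predicts, so a mismatch would not on its own show that momentum exists.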


Just because something is finite doesn’t mean that the chances increase over time.

Life is finite. If I am not married by the age of 50, I don’t need to worry, because my chances of finding the right wife increase as I get older.

I love thought experiments 🙂


You may be right, but my thought was that you (nearly) always side out in the end because of the nature of the game. On the other hand, you don’t always get married, and you don’t always get the right wife.


I also disagree with Ben, although from a statistical point of view he is absolutely right. He is arguing from the gambler’s fallacy.

But the gambler’s fallacy assumes that the events (in his example, the coin tosses) are independent of each other. I think you can’t say that in sport. An athlete’s performance is influenced by past or previous actions.


I feel like you are about to tell me the ‘hot hand’ is real 😉


Hey Mark! I am a doctoral student writing a paper with a colleague of mine using a large dataset from the men’s and women’s German Bundesliga. We have data on over 260 games from the scouts of all teams (including your old club), and we observe almost 38,000 serves from over 240 players. Is it possible to contact you and share thoughts and opinions on the topic? We are also looking at the timeout effect on serving errors and we have interesting results. We would be happy to discuss them with a professional coach like you, since it is a serious study with professional and advanced statistical methods. So if you are interested, just email me.
